
The beauty of this is that it can be run as a docker container while also reporting stats for the host system. What it does is collect system metrics like cpu/memory/storage usage and then it exports it for Prometheus to scrape. One such exporter is node-exporter, another piece of the puzzle provided as part of Prometheus. As described above, these targets need to export metric in the prometheus format. The main point is to monitor other things by adding targets to the scrape_configs section in prometheus.yml. While it’s certainly a good idea to monitor the monitoring service itself, this is just going to be an additional aspect of the set-up. The raw metrics can be inspected by visiting Adding a node-exporter target In other words, the Prometheus server comes with a metrics endpoint - or exporter, as we called it above - which reports stats for the Prometheus server itself. This corresponds to the scrape_configs setting by the same job_name and is a source of metrics provided by Prometheus. Visit to confirm the server is running and the configuration is the one we provided.įurther down below the ‘Configuration’ on the status page you will find a section ‘Targets’ which lists a ‘prometheus’ endpoint. To launch prometheus, run the command docker-compose up '-config.file=/etc/prometheus/prometheus.yml'Īnd a prometheus configuration file prometheus.yml: # prometheus.ymlĪs you can see, inside docker-compose.yml we map the prometheus config file into the container as a volume and add a -config.file parameter to the command pointing to this file. prometheus.yml:/etc/prometheus/prometheus.yml We’ll start off by launching Prometheus via a very simple docker-compose.yml configuration file # docker-compose.yml This is useful for scenarios where pull is not appropriate or feasible (for example short lived processes). Note that it is also possible to set up a push-gateway which is essentially an intermediary push target which Prometheus can then scrape. 
What this means is that this is a pull set-up. Exporters are http endpoints which expose ‘prometheus metrics’ for scraping by the Prometheus server.So while Prometheus collects stats and raises alerts it is completely agnostic of where these alerts should be displayed. Alertmanager manages the routing of alerts which Prometheus raises to various different channels like email, pagers, slack - and so on.While Prometheus exposes some of its internals like settings and the stats it gathers via basic web front-ends, it delegates the heavy lifting of proper graphical displays and dashboards to Grafana. Prometheus - this is the central piece, it contains the time series database and the logic of scraping stats from exporters (see below) as well as alerts.While this may be a bit more complicated to set up and manage on the surface, thanks to docker-compose it is actually quite easy to bundle everything up as a single service again with only one service definition file and (in our example) three configuration files.īefore we dive in, here’s a brief run-down of the components and what they do: As such, it consists of a few moving parts that are launched and configured separately. Prometheus is a system originally developed by SoundCloud as part of a move towards a microservice architecture. Note that older versions will very likely work as well, but I have not tested it. The versions used are docker 1.11 and docker-compose 1.7. In order to follow along, you will need only two thingsįollow the links for installation instructions.

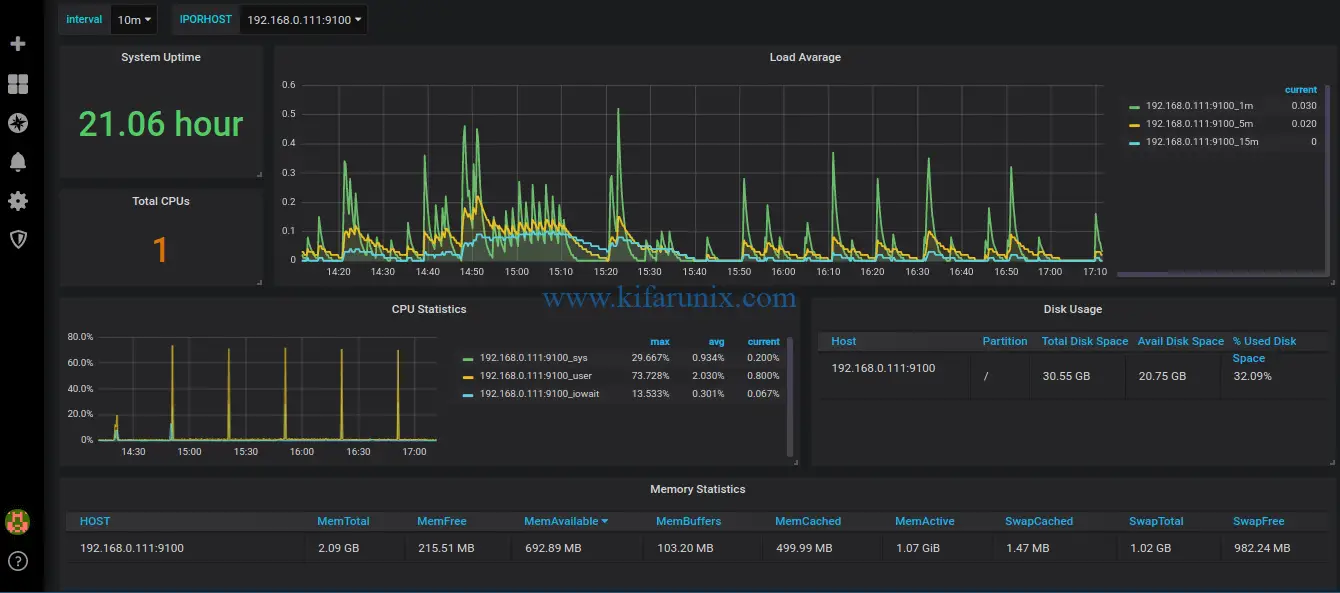

This covers the as-of-writing current versions of Prometheus (0.18.0) and Grafana (3.0.1).īecause pictures are worth more than a thousand words, here’s what a Prometheus powered Grafana dashboard looks like: While the set-up went fairly smoothly I did find some of the information on the web for similar set-ups slightly outdated and wanted to pull everything together in one place as a reference. I set it up for a trial run and it fit my needs perfectly. With those requirements in hand I soon came across Prometheus, a monitoring system and time series database, with its de-facto graphical front-end Grafana.

What I really needed was something lean I could spin up in a docker container and then ‘grow’ by extending the configuration or adding components as and when my needs change. When I recently needed to set up a monitoring system for a handful of servers, it became clear that many of the go-to solutions like Nagios, Sensu, New Relic would be either too heavy or too expensive – or both. The choice of monitoring systems out there is overwhelming.
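To make the set-up described in this post a bit more concrete, here is a minimal sketch of what the two files might look like in full. Only the volume mapping and the -config.file parameter come from the post itself. The image names and tag, the published ports (9090 and 9100), the scrape interval and the node-exporter service name are my assumptions, so treat this as a starting point rather than the definitive configuration.

```yaml
# docker-compose.yml (sketch; image names, tag and ports are assumptions)
version: '2'

services:
  prometheus:
    image: prom/prometheus:0.18.0
    volumes:
      # map the config file from the host into the container
      - ./prometheus.yml:/etc/prometheus/prometheus.yml
    command:
      # point the server at the mapped config file
      - '-config.file=/etc/prometheus/prometheus.yml'
    ports:
      - '9090:9090'   # assumed default port of the Prometheus web UI

  node-exporter:
    image: prom/node-exporter
    ports:
      - '9100:9100'   # assumed default port of the node-exporter endpoint
```

The matching Prometheus configuration could then list both targets. As far as I know, the 0.18 series uses the target_groups key for fixed targets, while later releases renamed it to static_configs, so adjust this to the version you run.

```yaml
# prometheus.yml (sketch; interval and target addresses are assumptions)
global:
  scrape_interval: 15s

scrape_configs:
  # Prometheus scraping its own metrics endpoint
  - job_name: 'prometheus'
    target_groups:
      - targets: ['localhost:9090']

  # node-exporter, reachable by its (hypothetical) compose service name
  - job_name: 'node-exporter'
    target_groups:
      - targets: ['node-exporter:9100']
```

Note that a node-exporter container started this plainly mostly sees its own container. Reporting on the host system typically needs extra host mounts or host networking, which I have left out of this sketch.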
